This is a product demo and research example, not medical advice. The screenshots were captured from a live InfiniSynapse task on May 14, 2026.

A family member sent me a clinic ad the other day. It sounded safe, official, almost settled:

"FDA-approved stem cell injection for knee osteoarthritis."

The price was about $3,000.

I paused at the phrase "FDA-approved." That is the kind of phrase that changes how people behave. Without it, you might hesitate. With it, you feel as if the hard part has already been checked by someone else.

But checked by whom? Approved for what? A clinical trial, or a treatment a clinic can sell?

I could have spent the evening doing the usual thing: open a few tabs, read half of an FDA page, skim two clinic websites, get pulled into patient testimonials, and end the night less sure than when I started.

Instead, I gave the whole question to InfiniSynapse.

I asked it to use web search and browser research together, rank sources by authority, separate verified facts from marketing claims and anecdotes, and give a clear decision.

That last part matters. I was not asking for a nice summary. I was asking what I should tell someone in my family before money changed hands.

The Internet Is Getting Harder to Trust

There is a larger reason this case stayed with me.

AI has made information cheaper to produce. That sounds good at first. More explainers, more summaries, more product pages, more patient stories, more "research-backed" claims. But the result is not always clarity.

For an ordinary person, the problem is no longer that there is no information. The problem is that everything now comes wrapped in a fluent paragraph. A clinic page can sound scientific. A forum post can sound experienced. A generated article can sound balanced. A marketing claim can borrow the language of regulation and clinical research.

So the hard part has moved.

It is not just finding information. It is deciding which information deserves to stand above the rest.

That is why this InfiniSynapse example matters. The value is not that it can search more pages than I can. The value is that it can slow down, rank the sources, compare their incentives, and keep the official record from being drowned out by confident noise.

The First Good Sign: It Did Not Start With the Ad

The thing I liked most happened before any conclusion appeared.

InfiniSynapse did not start with the clinic page. It did not start with the most emotional patient story. It did not start from a blog post explaining "regenerative medicine" in reassuring language.

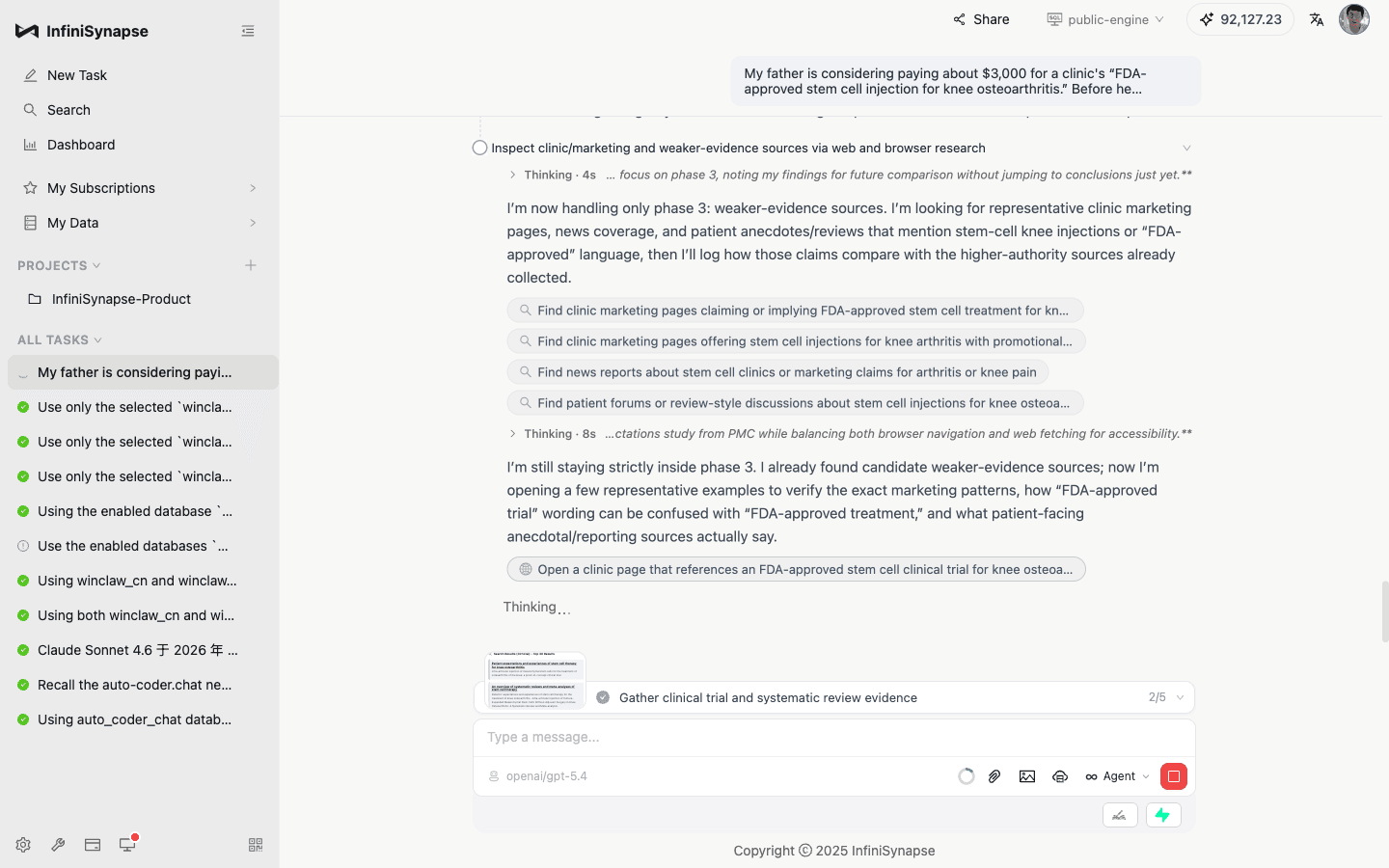

It started by building a five-stage plan.

The order was the important part. First came FDA, FTC, ClinicalTrials.gov, PubMed, and major orthopedic and rheumatology guideline sources. Then came clinical trials and systematic reviews. Only after that did it inspect clinic marketing pages, news-style articles, forums, and testimonials.

That sounds almost too obvious when written down. In practice, it is exactly where most ordinary internet research breaks.

We tend to start with the page that made the claim. The page is designed to persuade us. Then we look for confirmation or rebuttal while already carrying the emotional frame of the ad.

InfiniSynapse did the opposite. It established the strongest reference points first, then used weaker sources mainly to understand how the claim was being sold.

The Phrase "FDA-Approved" Started to Fall Apart

During the first phase, InfiniSynapse created a research notes file and began writing down verified statements as it worked.

The FDA finding was the center of the whole investigation.

The FDA's consumer alert says regenerative medicine products such as stem cells and exosomes have not been approved for orthopedic conditions such as osteoarthritis or knee pain. The same FDA page says the stem cell products currently approved in the United States are blood-forming stem cells from umbilical cord blood for disorders affecting blood production, not knee osteoarthritis.

That changed the question immediately.

It was no longer enough to ask whether stem cells are "promising" or whether a few patients report improvement. The real question became more precise:

If the FDA says this category is not approved for orthopedic conditions, what exactly does the clinic mean by "FDA-approved"?

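There is a huge difference between an FDA-authorized clinical trial and an FDA-approved treatment. The first means the agency has allowed a study to proceed. The second means a product has been reviewed and approved for marketing for a specific use. The words sound close enough that a patient can easily miss the gap. For a clinic selling a $3,000 procedure, that gap is everything.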

It Did Not Overcorrect Into a Simple "Scam" Answer

The second phase moved into clinical trials, PubMed, systematic reviews, and Cochrane evidence.

This is where I expected the answer to become either too harsh or too soft.

Too harsh would be: "All stem cell therapy is fake."

Too soft would be: "There are clinical trials, so it may be worth trying."

InfiniSynapse stayed in the middle, which is where the actual evidence seems to live.

It treated ClinicalTrials.gov listings as evidence that something is being studied, not evidence that a marketed treatment is approved. It treated small trials as signals, not final answers. It treated systematic reviews as more useful than individual positive studies, while still noting that the underlying trials vary a lot: different cell sources, different processing methods, different doses, short follow-up, small samples, and uncertain long-term safety.

The practical conclusion was sober:

Some symptom improvement may be possible in some settings. Routine paid use as an established, FDA-approved knee osteoarthritis treatment is not supported by the strongest sources.

That is a much more useful answer than a slogan.

Then It Looked at the Marketing

Only after the regulatory and clinical evidence base was in place did InfiniSynapse look at clinic pages, news reports, patient pages, and testimonials.

This order changed how those pages read.

If you start with testimonials, they feel powerful. Someone says they can walk again. Someone else says the pain disappeared. A clinic says "regeneration," "advanced biologics," and "FDA-approved clinical trial." The story has faces, emotion, and hope.

After reading the FDA and clinical evidence first, the same pages look different.

InfiniSynapse flagged the patterns that mattered: trial language that could be mistaken for product approval, claims about cartilage regeneration or reversing osteoarthritis, high cash-pay pricing, testimonials used as proof, vague descriptions of what is actually being injected, and no precise FDA-approved product name for the knee osteoarthritis indication.

It did not ignore patient stories. It just refused to let them outrank the sources that actually decide approval status and evidence quality.

That is the sort of restraint I want from a research agent.
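The red-flag patterns described in this section can be approximated with a few text checks. This is only a minimal sketch for illustration, not how InfiniSynapse works internally: the pattern names, the regular expressions, and the `flag_marketing_text` helper are all assumptions made up for this example, and real marketing copy would need far more careful handling.

```python
import re

# Hypothetical red-flag patterns, loosely based on the list above.
# The names and regexes are illustrative assumptions, not a vetted rule set.
RED_FLAGS = {
    # "FDA-approved clinical trial" style wording that blurs trial vs. approval
    "trial_language_near_approval": re.compile(
        r"fda[- ]approved (clinical )?(trial|study)", re.I
    ),
    # Claims about regrowing cartilage or reversing the disease
    "regeneration_claim": re.compile(
        r"regenerat|regrow|revers\w* (osteo)?arthritis", re.I
    ),
    # Testimonials presented as evidence
    "testimonial_as_proof": re.compile(
        r"testimonial|our patients say|success stor", re.I
    ),
    # A cash price quoted in the copy
    "cash_pay_pricing": re.compile(r"\$\s?\d[\d,]*"),
}


def flag_marketing_text(text):
    """Return the sorted names of every red-flag pattern found in the text."""
    return sorted(name for name, pattern in RED_FLAGS.items() if pattern.search(text))


ad = "FDA-approved clinical trial! Regenerate your cartilage for only $3,000."
print(flag_marketing_text(ad))
```

A match is a prompt to go read the primary source, not a verdict. The point of the sketch is only that these patterns are concrete enough to check for deliberately.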

The Most Persuasive Part Was Not the Final Answer

The final answer was useful. The visible work was more useful.

InfiniSynapse left about 60 concrete steps in the task trace: searches, browser reads, FDA checks, FTC checks, ClinicalTrials.gov pages, PubMed pulls, guideline lookups, note updates, and cross-validation.

Opening a tool row in the trace showed the actual step behind it.

That changes the feeling of the demo.

I do not have to say, "Trust the AI." I can point at the process and say, "Look at what it checked first. Look at what it treated as weaker evidence. Look at how it separated a trial listing from an approved treatment."

For a product like InfiniSynapse, this matters more than sounding clever. A serious answer should be inspectable.

The Recommendation Was Clear

After cross-validation, InfiniSynapse gave the answer I needed:

Do not book based on this claim.

Not because every stem-cell study is worthless. Not because no patient has ever reported improvement. The reason was narrower and stronger:

The phrase "FDA-approved stem cell injection for knee osteoarthritis" is not credible as stated. A clinical trial listing is not the same thing as an FDA-approved marketed treatment. Major guideline sources do not support routine paid stem-cell injections for knee osteoarthritis. Human evidence may suggest small symptom benefits in some settings, but cartilage regeneration and disease modification are not established. The safety picture is not settled enough to justify casual commercial certainty.

For a family decision about spending $3,000, that is the answer I wanted: clear enough to act on, careful enough not to overclaim.

What It Actually Did

The final report included a short process summary.

In plain English, InfiniSynapse did six things well.

It put official regulatory and guideline sources first. It opened and read primary pages instead of relying on snippets. It checked whether "clinical trial" language was being stretched into "approved treatment" language. It compared single studies with systematic reviews and meta-analyses. It looked at marketing pages and testimonials only after stronger evidence was already established. Then it checked whether the weaker sources overstated or conflicted with the stronger ones.

That is what "an expert by your side" should mean.

Not someone who sounds more confident than you. Someone who is more patient than you, more systematic than you, and less easily impressed by the first page that looks persuasive.
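The last of the six steps, checking whether weaker sources overstate or conflict with stronger ones, can be sketched in a few lines. This is a hedged illustration under stated assumptions: the tuple layout, the topic labels, the tier numbers, and the `find_conflicts` helper are hypothetical, and the sample stances simply restate the findings described in this article.

```python
from collections import defaultdict

# (topic, source, tier, stance) tuples. Tier 1 is the strongest source.
# The layout and all values are illustrative assumptions.
claims = [
    ("approved_for_knee_oa", "FDA consumer alert", 1, False),
    ("approved_for_knee_oa", "Clinic ad", 3, True),
    ("being_studied_in_trials", "ClinicalTrials.gov listing", 1, True),
    ("being_studied_in_trials", "Clinic ad", 3, True),
]


def find_conflicts(claims):
    """List (topic, weaker_source, stronger_source) where a weaker source
    disagrees with the strongest source available on the same topic."""
    by_topic = defaultdict(list)
    for topic, source, tier, stance in claims:
        by_topic[topic].append((tier, source, stance))

    conflicts = []
    for topic, entries in by_topic.items():
        entries.sort(key=lambda e: e[0])  # strongest tier first
        best_tier, best_source, best_stance = entries[0]
        for tier, source, stance in entries[1:]:
            if tier > best_tier and stance != best_stance:
                conflicts.append((topic, source, best_source))
    return conflicts


print(find_conflicts(claims))
```

In this toy data the clinic ad agrees with the record that trials exist but conflicts with it on approval, which is the same gap the report kept returning to.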

The Source Hierarchy Is the Story

The final answer began with a source hierarchy.

At the top were FDA, FTC, ClinicalTrials.gov, NIH/PubMed, and major professional guidelines. Then came Cochrane, systematic reviews, meta-analyses, and randomized trials. At the bottom were clinic marketing pages, news reports, forums, and testimonials.

That hierarchy is the product story.

The internet gives you pages.

InfiniSynapse gives you an evidence map.
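The hierarchy described above is easy to express as data. The following is a minimal sketch, assuming three tiers that mirror the grouping in the final answer. The tier keys, the `Source` class, and `rank_sources` are names invented for this example, not part of any InfiniSynapse API.

```python
from dataclasses import dataclass

# Lower number means more authoritative. The three tiers follow the grouping
# in the article; the keys themselves are illustrative assumptions.
TIERS = {
    "regulator_or_guideline": 1,  # FDA, FTC, ClinicalTrials.gov, NIH/PubMed, guidelines
    "review_or_trial": 2,         # Cochrane, systematic reviews, meta-analyses, RCTs
    "marketing_or_anecdote": 3,   # clinic pages, news reports, forums, testimonials
}


@dataclass(frozen=True)
class Source:
    name: str
    kind: str  # must be one of the TIERS keys


def rank_sources(sources):
    """Order sources from most to least authoritative.

    sorted() is stable, so sources in the same tier keep their input order.
    """
    return sorted(sources, key=lambda s: TIERS[s.kind])


sources = [
    Source("Clinic landing page", "marketing_or_anecdote"),
    Source("Cochrane review", "review_or_trial"),
    Source("FDA consumer alert", "regulator_or_guideline"),
    Source("Patient testimonial", "marketing_or_anecdote"),
    Source("ACR/Arthritis Foundation guideline", "regulator_or_guideline"),
]

ranked = rank_sources(sources)
for s in ranked:
    print(TIERS[s.kind], s.name)
```

The sort itself is trivial. What matters is deciding the tiers before reading any page, so the order does not depend on which source happens to be most persuasive.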

Key Sources Used

The live run used these source families:

- FDA consumer alert on regenerative medicine products, including stem cells and exosomes

- FDA patient and consumer information about regenerative medicine therapies

- FTC consumer warning on stem-cell therapy claims

- FTC 2025 enforcement action involving deceptive joint-pain stem-cell marketing

- ClinicalTrials.gov study NCT03990805

- ClinicalTrials.gov study NCT01183728

- 2019 ACR/Arthritis Foundation osteoarthritis guideline on PubMed

- Cochrane evidence on stem-cell injections for knee osteoarthritis

Task link:

https://app.infinisynapse.com/tasks?taskId=d0b88d6d-ab5c-4ac7-887b-48bacbff0e04

The Part I Keep Thinking About

This demo saved one family conversation from becoming a guessing game.

But the larger point is not the $3,000. It is the feeling of being able to slow the internet down.

A clinic can sound official. A patient story can sound moving. A study title can sound scientific. A phrase like "FDA-approved" can make the whole thing feel already settled.

InfiniSynapse did something simple and rare: it made the claim stand in line.

Regulator first. Guidelines next. Evidence after that. Marketing last.

That order is the product.